
Overview

Why real-time sync matters

Without webhooks, you would:

- Poll the ButterCMS API repeatedly for changes
- Risk stale content in external systems
- Waste resources checking for updates that don’t exist
- Accept delays between content changes and system updates

With webhooks:

- Changes trigger immediate updates
- External systems stay current
- Resources are used efficiently
- Users see fresh content faster

Synchronization architecture

Search index synchronization

Keep your search engine updated whenever content changes in ButterCMS.

Algolia integration

Algolia is a popular search-as-a-service platform. Here’s how to keep your Algolia index synced:

```javascript
const express = require('express');
const algoliasearch = require('algoliasearch'); // algoliasearch v4 API (initIndex was removed in v5)
const Butter = require('buttercms');

const app = express();
app.use(express.json()); // parse JSON webhook bodies into req.body

const algolia = algoliasearch('YOUR_APP_ID', 'YOUR_ADMIN_KEY');
const butter = Butter('YOUR_API_TOKEN');

// Index names for different content types
const indices = {
  pages: algolia.initIndex('pages'),
  posts: algolia.initIndex('posts'),
  products: algolia.initIndex('products')
};

app.post('/webhooks/buttercms', async (req, res) => {
  const { data, webhook } = req.body;
  // Events look like "page.published" or "collectionitem.delete"
  const [contentType, action] = webhook.event.split('.');

  try {
    switch (action) {
      case 'published':
        await syncToAlgolia(contentType, data);
        break;
      case 'unpublished':
      case 'delete':
        await removeFromAlgolia(contentType, data);
        break;
      // Other actions (such as drafts) are ignored
    }
    res.status(200).json({ synced: true });
  } catch (error) {
    console.error('Algolia sync failed:', error);
    res.status(500).json({ error: 'Sync failed' });
  }
});

async function syncToAlgolia(contentType, data) {
  switch (contentType) {
    case 'page': {
      // Fetch full page data (data.id is the page slug)
      const pageResponse = await butter.page.retrieve(data.page_type, data.id);
      const page = pageResponse.data.data;
      await indices.pages.saveObject({
        objectID: `page-${data.id}`,
        type: 'page',
        title: page.fields.title || data.name,
        slug: data.id,
        content: extractSearchableText(page.fields),
        pageType: data.page_type,
        updatedAt: data.updated
      });
      break;
    }
    case 'post': {
      // Fetch full post data
      const postResponse = await butter.post.retrieve(data.id);
      const post = postResponse.data.data;
      await indices.posts.saveObject({
        objectID: `post-${data.id}`,
        type: 'post',
        title: post.title,
        slug: post.slug,
        summary: post.summary,
        content: stripHtml(post.body),
        // Skip missing name parts instead of producing "undefined undefined"
        author: [post.author?.first_name, post.author?.last_name].filter(Boolean).join(' '),
        categories: post.categories?.map(c => c.name) || [],
        tags: post.tags?.map(t => t.name) || [],
        publishedAt: post.published,
        updatedAt: data.timestamp
      });
      break;
    }
    case 'collectionitem': {
      // data.id is the collection key; data.itemid identifies the item
      const collectionResponse = await butter.content.retrieve([data.id]);
      const items = collectionResponse.data.data[data.id] || [];
      const item = items.find(i => String(i.meta.id) === extractItemId(data.itemid));
      if (item) {
        await indices.products.saveObject({
          objectID: `${data.id}-${item.meta.id}`,
          type: 'collection',
          collection: data.id,
          ...item // Include all collection fields
        });
      }
      break;
    }
  }
  console.log(`Synced ${contentType} to Algolia:`, data.id);
}

async function removeFromAlgolia(contentType, data) {
  switch (contentType) {
    case 'page':
      await indices.pages.deleteObject(`page-${data.id}`);
      break;
    case 'post':
      await indices.posts.deleteObject(`post-${data.id}`);
      break;
    case 'collectionitem':
      await indices.products.deleteObject(`${data.id}-${extractItemId(data.itemid)}`);
      break;
  }
  console.log(`Removed ${contentType} from Algolia:`, data.id);
}

// Helper functions
function extractSearchableText(fields) {
  // Recursively extract text from all field values (strings, nested objects, arrays)
  return Object.values(fields)
    .map(value => {
      if (typeof value === 'string') return stripHtml(value);
      if (value && typeof value === 'object') return extractSearchableText(value); // skips null
      return '';
    })
    .join(' ');
}

function stripHtml(html) {
  // Tolerate empty fields such as a post without a body
  return (html || '').replace(/<[^>]*>/g, ' ').replace(/\s+/g, ' ').trim();
}

function extractItemId(itemid) {
  // itemid contains a segment like "[_id=1234]"; return "1234"
  const match = (itemid || '').match(/\[_id=(\d+)\]/);
  return match ? match[1] : null;
}
```
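`syncToAlgolia` and `removeFromAlgolia` each build Algolia object IDs on their own, so a typo in one of them would leave orphaned records that are never deleted. One option is to derive the ID in a single place. The sketch below is a hypothetical `algoliaObjectId` helper that reproduces the scheme used above (`page-<slug>`, `post-<slug>`, `<collection>-<itemId>`); the sample `itemid` string is an assumed format that only needs to contain `[_id=…]`.

```javascript
// Hypothetical helper: the one place that defines the Algolia objectID scheme,
// so the sync and delete paths cannot disagree.
function extractItemId(itemid) {
  // itemid contains a segment like "[_id=1234]"; return "1234" or null
  const match = /\[_id=(\d+)\]/.exec(itemid || '');
  return match ? match[1] : null;
}

function algoliaObjectId(contentType, data) {
  switch (contentType) {
    case 'page':
      return `page-${data.id}`;
    case 'post':
      return `post-${data.id}`;
    case 'collectionitem': {
      const itemId = extractItemId(data.itemid);
      // Without an item ID there is no safe record to touch
      return itemId ? `${data.id}-${itemId}` : null;
    }
    default:
      return null;
  }
}

// Usage (the itemid format here is an assumption)
console.log(algoliaObjectId('post', { id: 'hello-world' })); // post-hello-world
console.log(algoliaObjectId('collectionitem', { id: 'products', itemid: '/v2/content/?keys=products[_id=42]' })); // products-42
```

Returning `null` for unknown types or unparseable item IDs lets the caller skip the Algolia call instead of writing or deleting a record such as `products-null`.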

Elasticsearch integration

```javascript
const { Client } = require('@elastic/elasticsearch');

const elastic = new Client({ node: 'http://localhost:9200' });

// fullContent is the page or post object fetched from the ButterCMS API
async function syncToElasticsearch(contentType, data, fullContent) {
  const indexName = `buttercms-${contentType}s`; // e.g. "buttercms-pages"

  // `document` is the v8 client parameter name (the v7 client used `body`)
  await elastic.index({
    index: indexName,
    id: data.id,
    document: {
      title: fullContent.title || data.name,
      content: fullContent.body || JSON.stringify(fullContent.fields),
      slug: data.id,
      type: contentType,
      locale: data.locale,
      updatedAt: new Date(data.updated)
    }
  });
}

async function removeFromElasticsearch(contentType, data) {
  const indexName = `buttercms-${contentType}s`;

  // Treat "document not found" as success so repeated deletes don't fail the webhook
  await elastic.delete({ index: indexName, id: data.id }, { ignore: [404] });
}
```
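The Elasticsearch functions still need to be wired to incoming events, and `syncToElasticsearch` expects the full page or post, which you fetch from ButterCMS first. A minimal, hypothetical routing sketch that mirrors the Algolia handler’s `published` / `unpublished` / `delete` split; `routeToSearchIndex` and the injected `fetchContent`, `sync`, and `remove` handlers are illustrative names, injected so the routing logic can be tested without either service.

```javascript
// Hypothetical router: decides what to do with a ButterCMS webhook body.
// handlers.fetchContent, handlers.sync, and handlers.remove are injected,
// e.g. wrappers around the ButterCMS SDK and the Elasticsearch functions above.
async function routeToSearchIndex(body, handlers) {
  const [contentType, action] = body.webhook.event.split('.');
  const { data } = body;

  if (action === 'published') {
    const fullContent = await handlers.fetchContent(contentType, data);
    await handlers.sync(contentType, data, fullContent);
    return 'synced';
  }
  if (action === 'unpublished' || action === 'delete') {
    await handlers.remove(contentType, data);
    return 'removed';
  }
  return 'ignored'; // e.g. draft events
}

// Usage with stub handlers that record what would have been called
const calls = [];
const stubs = {
  fetchContent: async (type, data) => ({ title: `Full ${data.id}` }),
  sync: async (type, data, full) => { calls.push(['sync', type, full.title]); },
  remove: async (type, data) => { calls.push(['remove', type, data.id]); }
};

routeToSearchIndex({ webhook: { event: 'post.published' }, data: { id: 'hello' } }, stubs)
  .then(result => console.log(result, calls));
```

In the real handler, `sync` would be `syncToElasticsearch` and `remove` would be `removeFromElasticsearch`.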

Database synchronization

Keep a local database in sync for analytics, reporting, or backup purposes:

PostgreSQL sync

```javascript
const { Pool } = require('pg');

// Connection settings come from the standard PG* environment variables.
// Assumes an Express `app` with JSON body parsing, as in the Algolia example.
const pool = new Pool();

app.post('/webhooks/buttercms', async (req, res) => {
  const { data, webhook } = req.body;
  const client = await pool.connect();

  try {
    await client.query('BEGIN');
    switch (webhook.event) {
      case 'page.published':
        await syncPage(client, data);
        break;
      case 'page.delete':
        await deletePage(client, data);
        break;
      // syncPost and deletePost are not defined in this snippet
      case 'post.published':
        await syncPost(client, data);
        break;
      case 'post.delete':
        await deletePost(client, data);
        break;
    }
    await client.query('COMMIT');
    res.status(200).json({ synced: true });
  } catch (error) {
    await client.query('ROLLBACK');
    console.error('Database sync failed:', error);
    res.status(500).json({ error: 'Sync failed' });
  } finally {
    client.release();
  }
});

async function syncPage(client, data) {
  // ON CONFLICT needs a matching unique index, e.g.:
  //   CREATE UNIQUE INDEX pages_slug_locale ON pages (slug, COALESCE(locale, ''));
  await client.query(`
    INSERT INTO pages (slug, page_type, name, status, locale, updated_at, published_at)
    VALUES ($1, $2, $3, $4, $5, $6, $7)
    ON CONFLICT (slug, COALESCE(locale, ''))
    DO UPDATE SET
      name = EXCLUDED.name,
      status = EXCLUDED.status,
      updated_at = EXCLUDED.updated_at,
      published_at = EXCLUDED.published_at
  `, [data.id, data.page_type, data.name, data.status, data.locale, data.updated, data.published]);
}

async function deletePage(client, data) {
  // IS NOT DISTINCT FROM also matches rows whose locale is NULL
  await client.query(
    'DELETE FROM pages WHERE slug = $1 AND locale IS NOT DISTINCT FROM $2',
    [data.id, data.locale]
  );
}
```
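The handler calls `syncPost` and `deletePost`, which the example doesn’t define. Here is a minimal sketch following the same upsert pattern as `syncPage`, assuming a hypothetical `posts` table keyed by slug and assuming the post webhook’s `data.id` is the post slug (as the Algolia example treats it). Whether the post payload carries `status` is also an assumption, hence the fallback. The recording client is only a stand-in for a `pg` client so the queries can be inspected without a database.

```javascript
// Hypothetical schema (adjust to your own):
//   CREATE TABLE posts (slug TEXT PRIMARY KEY, status TEXT, synced_at TIMESTAMPTZ);
async function syncPost(client, data) {
  await client.query(
    `INSERT INTO posts (slug, status, synced_at)
     VALUES ($1, $2, NOW())
     ON CONFLICT (slug)
     DO UPDATE SET status = EXCLUDED.status, synced_at = NOW()`,
    // data.status is assumed; this only runs on post.published, so fall back to that
    [data.id, data.status || 'published']
  );
}

async function deletePost(client, data) {
  await client.query('DELETE FROM posts WHERE slug = $1', [data.id]);
}

// Usage with a stand-in client that records queries instead of running them
const recorded = [];
const fakeClient = { query: async (text, params) => { recorded.push({ text, params }); } };

syncPost(fakeClient, { id: 'hello-world', status: 'published' })
  .then(() => deletePost(fakeClient, { id: 'hello-world' }))
  .then(() => console.log(recorded.map(q => q.params)));
```

Because both functions take the transaction’s `client`, they commit or roll back together with everything else the handler does for that event.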

Mobile app push notifications

Notify mobile app users when new content is published:

Firebase Cloud Messaging

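```javascript
const admin = require('firebase-admin');

// Uses Application Default Credentials.
// Assumes `app` (Express) and `butter` are set up as in the Algolia example.
admin.initializeApp();

app.post('/webhooks/buttercms', async (req, res) => {
  const { data, webhook } = req.body;

  try {
    if (webhook.event === 'post.published') {
      // Fetch full post for notification content
      const postResponse = await butter.post.retrieve(data.id);
      const post = postResponse.data.data;

      // Send a push notification to every device subscribed to the topic.
      // messaging().send() replaces the deprecated legacy sendToTopic() API.
      await admin.messaging().send({
        topic: 'blog-updates',
        notification: {
          title: 'New Blog Post!',
          body: post.title,
          // Only set imageUrl when the post has a featured image
          ...(post.featured_image && { imageUrl: post.featured_image })
        },
        data: {
          // FCM data values must be strings
          type: 'blog_post',
          slug: post.slug,
          url: `https://yoursite.com/blog/${post.slug}`
        }
      });
      console.log('Push notification sent for:', post.title);
    }
    res.status(200).json({ notified: true });
  } catch (error) {
    console.error('Push notification failed:', error);
    res.status(500).json({ error: 'Notification failed' });
  }
});
```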
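Building the FCM message in a separate, pure function makes it easy to unit-test without Firebase credentials. The sketch below is a hypothetical `buildPostMessage` that returns an object you could pass to `admin.messaging().send()`, the current message-sending API (the legacy `sendToTopic` is deprecated). It converts every `data` value to a string, because FCM rejects non-string data values, and omits `imageUrl` when there is no featured image. The `topic` and `siteUrl` defaults are placeholders.

```javascript
// Hypothetical helper: builds an FCM topic message for a ButterCMS post.
function buildPostMessage(post, { topic = 'blog-updates', siteUrl = 'https://yoursite.com' } = {}) {
  const rawData = {
    type: 'blog_post',
    slug: post.slug,
    url: `${siteUrl}/blog/${post.slug}`
  };

  // FCM only accepts string values in `data`: drop empty ones, stringify the rest
  const data = {};
  for (const [key, value] of Object.entries(rawData)) {
    if (value !== undefined && value !== null) data[key] = String(value);
  }

  const notification = { title: 'New Blog Post!', body: post.title };
  if (post.featured_image) notification.imageUrl = post.featured_image;

  return { topic, notification, data };
}

// Usage
const message = buildPostMessage({ slug: 'hello-world', title: 'Hello, world', featured_image: null });
console.log(message.notification); // { title: 'New Blog Post!', body: 'Hello, world' }
// Then, in the webhook handler: await admin.messaging().send(message);
```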

Apple Push Notification Service (APNs)

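```javascript
const apn = require('apn');

const apnProvider = new apn.Provider({
  token: {
    key: 'path/to/APNsAuthKey.p8', // .p8 auth key from your Apple Developer account
    keyId: 'KEY_ID',
    teamId: 'TEAM_ID'
  },
  production: process.env.NODE_ENV === 'production' // use the sandbox gateway otherwise
});

async function sendApnNotification(deviceTokens, post) {
  const notification = new apn.Notification();
  notification.expiry = Math.floor(Date.now() / 1000) + 3600; // expire after one hour
  notification.badge = 1;
  notification.sound = 'ping.aiff';
  notification.alert = { title: 'New Content', body: post.title };
  notification.payload = { type: 'post', slug: post.slug };
  notification.topic = 'com.yourapp.bundle'; // your app's bundle ID

  // Resolves to { sent, failed }; failed entries identify tokens APNs rejected
  const result = await apnProvider.send(notification, deviceTokens);
  if (result.failed.length > 0) {
    console.warn(`APNs rejected ${result.failed.length} device token(s)`);
  }
  return result;
}
```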
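`sendApnNotification` needs a list of device tokens that you keep in your own storage. When APNs reports a token as invalid, pruning it keeps future sends from failing on the same device. node-apn resolves `send()` with `{ sent, failed }`, where each failed entry carries the `device` token plus either an `error` or a `status` and `response` (which includes a `reason`). The hypothetical `tokensToRemove` helper below picks out tokens rejected with `BadDeviceToken` or `Unregistered`; how you delete them from storage is up to you, and the sample result is made up for illustration.

```javascript
// APNs rejection reasons that mean the token should not be used again
const PERMANENT_FAILURES = new Set(['BadDeviceToken', 'Unregistered']);

// Hypothetical helper: returns device tokens to delete from your own storage,
// given the { sent, failed } result that apnProvider.send() resolves with.
function tokensToRemove(result) {
  return result.failed
    .filter(entry => entry.response && PERMANENT_FAILURES.has(entry.response.reason))
    .map(entry => entry.device);
}

// Usage with a made-up result shaped like node-apn's
const sample = {
  sent: [{ device: 'token-ok' }],
  failed: [
    { device: 'token-gone', status: '410', response: { reason: 'Unregistered' } },
    { device: 'token-busy', status: '429', response: { reason: 'TooManyRequests' } },
    { device: 'token-net', error: new Error('socket hang up') }
  ]
};
console.log(tokensToRemove(sample)); // [ 'token-gone' ]
```

Transient failures (rate limiting, network errors) are deliberately left alone, since the same token may work on the next send.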

Email marketing integration

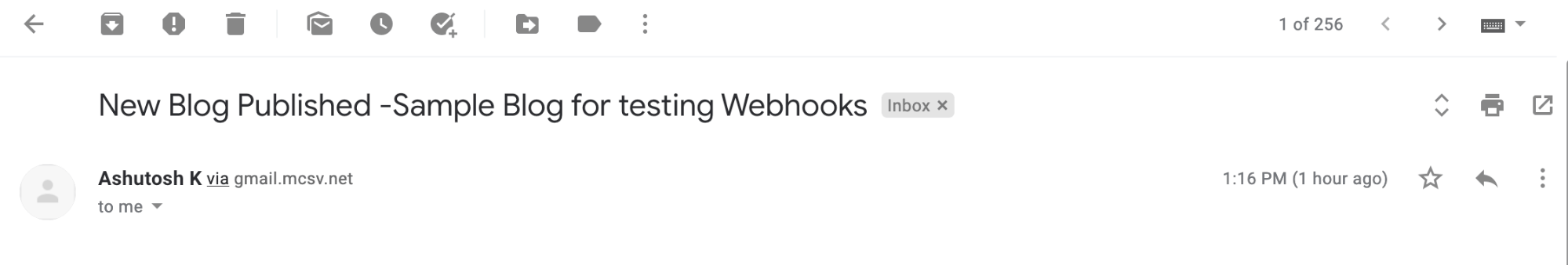

Mailchimp integration

Automatically create email campaigns when content is published:

```javascript
const mailchimp = require('@mailchimp/mailchimp_marketing');

mailchimp.setConfig({
  apiKey: process.env.MAILCHIMP_API_KEY,
  server: process.env.MAILCHIMP_SERVER_PREFIX // e.g. "us19", the suffix of your API key
});

// Assumes `app` (Express) and `butter` are set up as in the Algolia example
app.post('/webhooks/buttercms', async (req, res) => {
  const { data, webhook } = req.body;

  try {
    if (webhook.event === 'post.published') {
      // Fetch full post data
      const postResponse = await butter.post.retrieve(data.id);
      const post = postResponse.data.data;

      // Copy a template campaign you have already configured in Mailchimp
      const campaign = await mailchimp.campaigns.replicate(
        process.env.MAILCHIMP_TEMPLATE_CAMPAIGN_ID
      );

      // Update the copy with the new post's title
      await mailchimp.campaigns.update(campaign.id, {
        settings: {
          subject_line: `New Blog Published - ${post.title}`,
          title: `New Blog Published - ${post.title}`
        }
      });

      // Send the campaign
      await mailchimp.campaigns.send(campaign.id);
      console.log('Mailchimp campaign sent for:', post.title);
    }
    res.status(200).json({ emailed: true });
  } catch (error) {
    console.error('Mailchimp campaign failed:', error);
    res.status(500).json({ error: 'Email failed' });
  }
});
```
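Sending a campaign is not something you want to repeat. If your endpoint could see the same `post.published` event more than once (for example, if a delivery is retried, or a post is unpublished and republished), a guard keyed on the event and post slug prevents duplicate sends. This is a minimal in-memory sketch with a hypothetical `createDedupe` helper; with several server instances you would keep the keys in a shared store such as Redis or your database instead.

```javascript
// Hypothetical helper: remembers keys for `ttlMs` and reports repeats.
// `now` is injectable so the expiry logic can be tested.
function createDedupe(ttlMs = 24 * 60 * 60 * 1000, now = () => Date.now()) {
  const seen = new Map(); // key -> expiry timestamp

  return function isDuplicate(key) {
    const t = now();
    // Drop expired keys so the map doesn't grow forever
    for (const [k, expiresAt] of seen) {
      if (expiresAt <= t) seen.delete(k);
    }
    if (seen.has(key)) return true;
    seen.set(key, t + ttlMs);
    return false;
  };
}

// Usage inside the webhook handler, before replicating the campaign:
const isDuplicate = createDedupe();
const key = 'post.published:hello-world'; // webhook.event + ':' + data.id
console.log(isDuplicate(key)); // false (first time)
console.log(isDuplicate(key)); // true (repeat within 24 hours)
```

This marks a key as soon as it is checked, so a send that fails afterwards won’t be retried automatically. That trade-off favors never double-sending; move the marking after a successful send if you prefer the opposite.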

Social media synchronization

Automatically share new content on social platforms:

Twitter/X integration

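```javascript
const { TwitterApi } = require('twitter-api-v2');

// User-context credentials: tweets are posted as the account these tokens belong to
const twitter = new TwitterApi({
  appKey: process.env.TWITTER_APP_KEY,
  appSecret: process.env.TWITTER_APP_SECRET,
  accessToken: process.env.TWITTER_ACCESS_TOKEN,
  accessSecret: process.env.TWITTER_ACCESS_SECRET
});

// Assumes `app` (Express) and `butter` are set up as in the Algolia example
app.post('/webhooks/buttercms', async (req, res) => {
  const { data, webhook } = req.body;

  try {
    if (webhook.event === 'post.published') {
      const postResponse = await butter.post.retrieve(data.id);
      const post = postResponse.data.data;

      // The summary may be empty; only add an ellipsis when it is actually cut
      const summary = post.summary || '';
      const excerpt = summary.length > 200 ? `${summary.substring(0, 200)}...` : summary;

      const tweetText = `📝 New blog post: ${post.title}\n\n` +
        `${excerpt}\n\n` +
        `Read more: https://yoursite.com/blog/${post.slug}`;

      await twitter.v2.tweet(tweetText);
      console.log('Tweeted:', post.title);
    }
    res.status(200).json({ shared: true });
  } catch (error) {
    console.error('Tweet failed:', error);
    res.status(500).json({ error: 'Share failed' });
  }
});
```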
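Posts on X are limited to 280 characters, and a long title plus a 200-character excerpt can exceed that. A hypothetical `composeTweet` helper can trim the excerpt to whatever room is left. It counts the link as 23 characters, because X shortens every URL with t.co to that length, and otherwise uses JavaScript string length; X’s own weighted counting (for emoji and CJK text, for example) differs, so treat the result as an approximation. `siteUrl` is a placeholder.

```javascript
const TWEET_LIMIT = 280;
const TCO_URL_LENGTH = 23; // X counts every link as 23 characters

// Hypothetical helper: builds the tweet text, shortening the excerpt to fit.
// A very long title can still exceed the limit; trim the title too if that matters.
function composeTweet(post, siteUrl = 'https://yoursite.com') {
  const url = `${siteUrl}/blog/${post.slug}`;
  const head = `📝 New blog post: ${post.title}`;
  const tail = 'Read more: ';
  const separator = '\n\n';

  // Room left for the excerpt after the fixed parts (the link counts as 23)
  const fixed = head.length + separator.length * 2 + tail.length + TCO_URL_LENGTH;
  const room = TWEET_LIMIT - fixed;

  let excerpt = post.summary || '';
  if (excerpt.length > room) {
    excerpt = room > 3 ? `${excerpt.slice(0, room - 3).trimEnd()}...` : '';
  }

  const parts = [head];
  if (excerpt) parts.push(excerpt); // skip the blank section when there is no summary
  parts.push(`${tail}${url}`);
  return parts.join(separator);
}

// Usage
console.log(composeTweet({ title: 'Hello', slug: 'hello', summary: 'A short summary.' }));
```

In the handler, the body would then be `await twitter.v2.tweet(composeTweet(post));`.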

LinkedIn integration

```javascript
const axios = require('axios');

// Shares a post through LinkedIn's UGC Posts API.
// LINKEDIN_PERSON_URN looks like "urn:li:person:abc123".
async function shareOnLinkedIn(post) {
  const accessToken = process.env.LINKEDIN_ACCESS_TOKEN;
  const personUrn = process.env.LINKEDIN_PERSON_URN;
  const summary = post.summary || '';

  await axios.post(
    'https://api.linkedin.com/v2/ugcPosts',
    {
      author: personUrn,
      lifecycleState: 'PUBLISHED',
      specificContent: {
        'com.linkedin.ugc.ShareContent': {
          shareCommentary: { text: `New blog post: ${post.title}\n\n${summary}` },
          shareMediaCategory: 'ARTICLE',
          media: [{
            status: 'READY',
            originalUrl: `https://yoursite.com/blog/${post.slug}`,
            title: { text: post.title },
            description: { text: summary }
          }]
        }
      },
      visibility: { 'com.linkedin.ugc.MemberNetworkVisibility': 'PUBLIC' }
    },
    {
      headers: {
        Authorization: `Bearer ${accessToken}`,
        'Content-Type': 'application/json',
        'X-Restli-Protocol-Version': '2.0.0' // required by LinkedIn's Rest.li APIs
      }
    }
  );
}
```
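When one webhook fans out to several platforms, a failure on one (an expired LinkedIn token, say) shouldn’t stop the others. `Promise.allSettled` runs every share and reports each outcome instead of stopping at the first rejection. A minimal sketch with a hypothetical `shareEverywhere` helper; the sharer functions are injected, so in practice you would pass wrappers around `twitter.v2.tweet` and `shareOnLinkedIn`.

```javascript
// Hypothetical helper: runs every sharer and collects per-platform results.
async function shareEverywhere(post, sharers) {
  const names = Object.keys(sharers);
  const outcomes = await Promise.allSettled(names.map(name => sharers[name](post)));

  return outcomes.map((outcome, i) => {
    if (outcome.status === 'fulfilled') return { platform: names[i], ok: true };
    const { reason } = outcome;
    return {
      platform: names[i],
      ok: false,
      error: reason instanceof Error ? reason.message : String(reason)
    };
  });
}

// Usage with stand-in sharers (replace with real Twitter and LinkedIn calls)
shareEverywhere({ title: 'Hello', slug: 'hello' }, {
  twitter: async () => 'tweeted',
  linkedin: async () => { throw new Error('token expired'); }
}).then(results => console.log(results));
```

The webhook handler can then log or queue only the failed platforms for retry rather than re-sharing everywhere.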

Best practices

Synchronization patterns

| Pattern | Use Case | Implementation |
|---|---|---|
| Immediate sync | Search indexes, caches | Process directly in webhook handler |
| Queued sync | Email, social, analytics | Add to job queue, process async |
| Batch sync | Database mirrors | Accumulate changes, sync periodically |
| Event sourcing | Audit logs, replay | Store all events, rebuild state as needed |
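For the batch pattern, one common approach is to coalesce events per content item so that only the latest event for each item is applied when the batch is flushed. The sketch below is a hypothetical in-memory `createBatcher`; `flushFn` stands in for whatever bulk write you use (a bulk index request, a multi-row upsert), and you would call `flush()` from a timer. Events that arrive during a flush go into the next batch, and a failed batch is put back unless a newer event for the same item has arrived.

```javascript
// Hypothetical batcher: keeps only the most recent event per content item.
function createBatcher(flushFn) {
  let pending = new Map(); // "type:id:itemid:locale" -> latest webhook body

  return {
    add(body) {
      const [contentType] = body.webhook.event.split('.');
      const { id, itemid = '', locale = '' } = body.data;
      const key = [contentType, id, itemid, locale].join(':');
      pending.delete(key); // re-insert so Map order reflects the latest arrival
      pending.set(key, body);
    },
    async flush() {
      if (pending.size === 0) return 0;
      const batch = pending;
      pending = new Map(); // events arriving during flushFn wait for the next flush
      try {
        await flushFn([...batch.values()]);
      } catch (error) {
        // Re-queue failed events unless a newer event for the same item arrived
        for (const [key, body] of batch) {
          if (!pending.has(key)) pending.set(key, body);
        }
        throw error;
      }
      return batch.size;
    }
  };
}

// Usage sketch (bulkUpsert and the 30-second interval are illustrative):
// const batcher = createBatcher(events => bulkUpsert(events));
// app.post('/webhooks/buttercms', (req, res) => { batcher.add(req.body); res.sendStatus(202); });
// setInterval(() => batcher.flush().catch(console.error), 30000);
```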

Error recovery

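Always plan for sync failures. This helper retries an operation with exponential backoff and, after the final attempt, records it in a dead-letter queue for manual review:

```javascript
// Resolve after `ms` milliseconds
const sleep = (ms) => new Promise(resolve => setTimeout(resolve, ms));

// `deadLetterQueue` is assumed to be a job queue you provide (e.g. a Bull queue)
async function syncWithRetry(operation, maxRetries = 3) {
  for (let attempt = 1; attempt <= maxRetries; attempt++) {
    try {
      return await operation();
    } catch (error) {
      console.error(`Sync attempt ${attempt} failed:`, error);

      if (attempt === maxRetries) {
        // Queue for manual review
        await deadLetterQueue.add({
          operation: operation.name,
          error: error.message,
          timestamp: new Date()
        });
        throw error;
      }

      // Wait before retrying: 2s, 4s, 8s, ... (exponential backoff)
      await sleep(Math.pow(2, attempt) * 1000);
    }
  }
}
```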
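A variant worth considering: when many sync jobs fail together (say, the search service is briefly down), fixed exponential delays make them all retry at the same moments. “Full jitter” instead waits a random time between zero and the exponential cap. The sketch below swaps in that technique and injects `sleep` and `random` so it can be tested without real waiting; `retryWithJitter` and its option names are hypothetical, and the dead-letter step is left to the caller.

```javascript
// Hypothetical variant of syncWithRetry using "full jitter" backoff.
async function retryWithJitter(operation, {
  maxRetries = 3,
  baseMs = 1000,
  maxDelayMs = 30000,
  sleep = ms => new Promise(resolve => setTimeout(resolve, ms)),
  random = Math.random
} = {}) {
  for (let attempt = 1; attempt <= maxRetries; attempt++) {
    try {
      return await operation();
    } catch (error) {
      if (attempt === maxRetries) throw error; // caller can dead-letter it
      // The cap grows exponentially; the actual wait is uniform in [0, cap)
      const cap = Math.min(maxDelayMs, baseMs * 2 ** attempt);
      await sleep(Math.floor(random() * cap));
    }
  }
}

// Usage: fails twice, then succeeds (no-op sleep so the demo runs instantly)
let calls = 0;
retryWithJitter(async () => {
  calls += 1;
  if (calls < 3) throw new Error('temporarily unavailable');
  return 'ok';
}, { sleep: async () => {} }).then(result => console.log(result, calls)); // ok 3
```

`maxDelayMs` keeps late attempts from waiting unreasonably long; ButterCMS-triggered work that still fails after the last attempt can then go to the same dead-letter queue as above.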