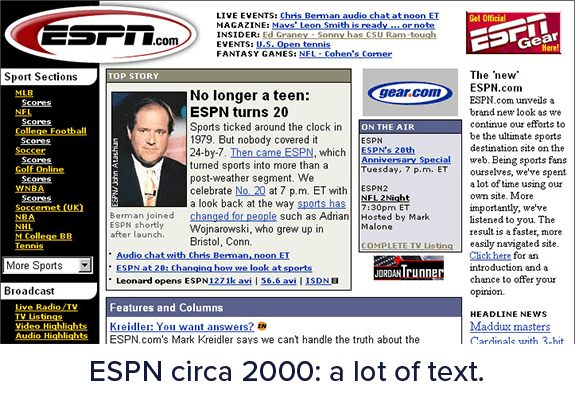

Over the years, web applications have grown considerably in size with the arrival of multimedia and rich graphics, along with the numerous supporting libraries and stylesheets that make them possible.

Modern user interfaces load not only large numbers of resources but also large amounts of data, including data from API-based content management systems. Downloading these resources and data on every visit is slow and costly.

This is precisely where web and API caching strategies come to the rescue. Caching reduces the number of these calls while maintaining the quality of the output.

Caching means storing a resource or a piece of data after it has been retrieved once. Subsequent requests from the browser or API client are then answered from the cache, so the server does not have to process or retrieve the same data repeatedly. The result is a reduced load on the server.

Caching seems like a simple concept: store the data and retrieve it from the cache when it is needed again. However, it can become complex in practice, and caching strategies should be understood and applied carefully. In this article, we cover what you need to know about caching and the various caching strategies.
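The store-then-reuse idea can be sketched in a few lines of Python. This is a minimal illustration rather than a production cache: the `SimpleCache` class and the `fetch` callback are hypothetical names introduced here, and the sketch deliberately ignores expiry and memory limits.

```python
from typing import Callable, Dict


class SimpleCache:
    """Hypothetical in-memory cache: fetch once, then serve the stored copy."""

    def __init__(self) -> None:
        self._store: Dict[str, bytes] = {}
        self.misses = 0  # how many times we had to go to the server

    def get_or_fetch(self, key: str, fetch: Callable[[str], bytes]) -> bytes:
        # Only call the (expensive) fetch function when the key is not cached.
        if key not in self._store:
            self.misses += 1
            self._store[key] = fetch(key)
        return self._store[key]
```

Calling `get_or_fetch` twice with the same key invokes `fetch` only the first time; the second call is answered from the stored copy.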

Now that we have an understanding of what caching is, let us look at the caching strategies that exist. Each cached resource needs the right strategy associated with it so that caching does not disrupt the functionality of the application. The rest of this article discusses the different variations and explains what makes each strategy unique.

Browser-level caching stores resources and API responses on the user's device. This enables the web application to load considerably faster than it would on a fresh load. Browser-level caching uses the browser’s local data store and disk space to save resources. The browser also maintains an index of these resources along with their expiry times. The expiry time is the point until which a cached copy is considered valid. Expiry times may differ depending on how often you change your resources.
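The index of resources and expiry times can be pictured roughly as follows. This is a simplified sketch, not how any particular browser implements its cache: `ExpiringCache`, its methods, and the injectable `clock` parameter are all assumptions made for illustration.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class ExpiringCache:
    """Hypothetical index of cached bodies keyed by URL, each with an expiry time."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock  # injectable so the behaviour can be tested
        self._index: Dict[str, Tuple[float, bytes]] = {}

    def put(self, url: str, body: bytes, ttl_seconds: float) -> None:
        # Record the body together with the moment it stops being valid.
        self._index[url] = (self._clock() + ttl_seconds, body)

    def get(self, url: str) -> Optional[bytes]:
        entry = self._index.get(url)
        if entry is None:
            return None  # never cached
        expires_at, body = entry
        if self._clock() >= expires_at:
            del self._index[url]  # expired: drop it so the caller refetches
            return None
        return body
```

A caller that receives `None` would fetch the resource again and call `put` with a fresh expiry.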

The browser can cache not only JS and CSS files but also images, API responses, and full web pages, depending on how the website you visit is configured.

Benefits

Drawbacks

Templating of web pages has become a common approach to web development. The object-level caching strategy caches pre-processed pages, along with their data, at the server level. This avoids both frequent database calls and repeated page processing. An object can be any resource, piece of data, or web page. Object-level caching is most beneficial when the web application regularly receives many new users, whose browsers have nothing cached yet.
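As a rough illustration of caching a pre-processed page on the server, the sketch below memoizes a rendering function with Python's standard `functools.lru_cache`. The `POSTS` dictionary, `load_post`, and `render_page` are hypothetical stand-ins for a real database and template engine.

```python
from functools import lru_cache

# Hypothetical stand-in for a database table of posts.
POSTS = {"hello": {"title": "Hello", "body": "First post"}}


def load_post(slug: str) -> dict:
    # In a real application this would be a database query.
    return POSTS[slug]


@lru_cache(maxsize=256)
def render_page(slug: str) -> str:
    # Runs only on a cache miss; later calls reuse the rendered HTML.
    post = load_post(slug)
    return f"<article><h1>{post['title']}</h1><p>{post['body']}</p></article>"
```

When the underlying content changes, the cached pages have to be invalidated, for example with `render_page.cache_clear()`; otherwise visitors keep receiving the old HTML.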

Benefits

Drawbacks

Network-level caching is an approach in which intermediate HTTP servers and proxies between the user and your application are configured to cache API calls and resources. In this method, the network layer holds the responses and resources for the corresponding URLs. This type of caching works strictly on the basis of the URL. The network layer can be configured to use or ignore query parameters in the URL as your application requires.

Network-layer caching improves overall performance because a request answered from the network layer never reaches the application server at all. It can therefore perform better than object-level caching. In certain setups, users hit a regional network layer close to their location. This can improve response time considerably, as a round trip to the origin servers is saved.
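Because this kind of caching is keyed on the URL, how query parameters are handled decides whether two requests share one cache entry. The function below is a minimal sketch of such a key, using only Python's standard `urllib.parse`; the name `cache_key` and its `ignore_query` and `ignored_params` options are hypothetical and do not correspond to any particular proxy's configuration.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit


def cache_key(
    url: str,
    ignore_query: bool = False,
    ignored_params: frozenset = frozenset(),
) -> str:
    """Build a cache key from a URL (hypothetical helper for illustration)."""
    parts = urlsplit(url)
    # Host names are case-insensitive, so normalise them.
    base = f"{parts.scheme}://{parts.netloc.lower()}{parts.path}"
    if ignore_query:
        return base
    # Drop parameters that do not affect the response and sort the rest,
    # so that ?a=1&b=2 and ?b=2&a=1 map to the same entry.
    params = sorted(
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if name not in ignored_params
    )
    return f"{base}?{urlencode(params)}" if params else base
```

For example, ignoring a tracking parameter such as `utm_source` lets requests that differ only in that parameter reuse the same cached response.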

Benefits

Drawbacks

This is often the most capable caching method in use at present. Third-party caching involves routing resource-intensive calls through third-party cache providers. These providers are then responsible for holding, managing, and cleaning the cache as needed. Moreover, such cache stores provide better transparency into what the cache currently holds and allow you to clear it when desired.

With third-party caching, you can also make sure that the same cache is served around the world, irrespective of country or region. These providers also improve website performance by replicating data to locations closer to the user.

Benefits

Drawbacks

From the above, it is clear that caching is a must for the majority of web applications. Below are some high-level advantages of implementing caching:

To get the right benefits from each caching mechanism, we need to understand the concepts associated with it in depth. These concepts are discussed further in the next section.

A cache is a store of files that were processed in the past and are expected to be used again in the future. These files may not remain the same throughout their lifetime, and a file might change after it has been cached. In such cases, cache expiry becomes important.

Cache expiry is the time until which a cached copy is considered valid. When a request for a file is sent to the server, the server returns headers (for example, `Cache-Control` or `Expires`) that specify the expiry time for that file. The caching engine (browser, third party, or network layer) tracks this expiry for each file and refreshes the file once it has passed. This greatly reduces the risk of serving a stale cache. When comparing caching strategies, make sure that each one offers an option for configuring a custom cache expiry.
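To make the expiry check concrete, here is a small sketch of how a caching engine might decide whether a stored response is still usable, based on the standard `Cache-Control` response header. It is simplified on purpose: the helper names `parse_directives` and `is_fresh` are hypothetical, only `max-age`, `no-store`, and `no-cache` are considered, and a response marked `no-cache` is treated as not reusable without revalidation.

```python
def parse_directives(cache_control: str) -> dict:
    """Split a Cache-Control header value into a {name: value} mapping."""
    directives = {}
    for part in cache_control.split(","):
        name, _, value = part.strip().partition("=")
        if name:
            directives[name.lower()] = value.strip('"')
    return directives


def is_fresh(cache_control: str, age_seconds: int) -> bool:
    """Return True if a response of the given age may be served from cache."""
    directives = parse_directives(cache_control)
    # no-store forbids caching; no-cache requires revalidation before reuse.
    if "no-store" in directives or "no-cache" in directives:
        return False
    max_age = directives.get("max-age", "")
    # Without a usable max-age this sketch conservatively refetches.
    return max_age.isdigit() and age_seconds < int(max_age)
```

With a header of `public, max-age=3600`, a copy that is two minutes old is served from the cache, while a copy that is an hour old triggers a refresh.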

Caching, although beneficial, still needs to be done carefully. You need to identify which files are worth caching and decide on a suitable expiry time for each. Extreme caching is the situation where the web application servers cache every possible file with a long expiration time.

Extreme caching can cause the web application to misbehave. Stale JS or CSS files, or stale data from API calls, can result in unexpected behavior. Hence, it is important to cache resources selectively and to refresh the cache whenever updates are made. The next section helps you plan caching for each type of resource.

Caching static resources and caching API calls require two different strategies, because each deals with a different type of data. Before planning either, you need to segregate the resources and API calls into the categories below:

Segregating resources and API calls helps in finalizing the approach to follow for each. Next, organize the static resources into a separate folder so that they can be treated differently. Now, let us look at the methods to follow for each category.

The lifetime of static resources is normally long. These resources generally include libraries and files brought in from a third party, such as Bootstrap, jQuery, Google Fonts, and Font Awesome. These files should be cached for longer periods, with expiration times ranging from 1 month to 3 months. The majority of people prefer to use a third-party CDN to host these libraries. However, it is not advisable to do so.

Caching static resources reduces the number of resource calls to the server considerably. These files can be cached using web browser caching, where the browser holds and manages the lifetime of each file. When your web application URL is entered, the browser checks every request that the web page makes and serves any file it already has from the disk cache.

Static resources can combine browser caching and third-party caching for the best web application performance. This ensures that even on a new user's first visit, when the browser has nothing cached yet, the web application servers are rarely hit.
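A server typically tells the browser how long to keep a static file through the `Cache-Control` response header, whose `max-age` directive is expressed in seconds. The helper below is a minimal sketch for the 1 to 3 month range discussed here; `static_cache_headers` is a hypothetical name, and a month is approximated as 30 days.

```python
SECONDS_PER_DAY = 86_400


def static_cache_headers(days: int = 30) -> dict:
    """Build response headers that let clients cache a static file for `days` days."""
    if days <= 0:
        raise ValueError("days must be positive")
    # "public" allows shared caches (proxies, CDNs) to store the file as well.
    return {"Cache-Control": f"public, max-age={days * SECONDS_PER_DAY}"}


one_month = static_cache_headers(30)     # max-age=2592000
three_months = static_cache_headers(90)  # max-age=7776000
```

Long lifetimes like these work best when a changed file is published under a new URL (for example, with a version in the file name), so that browsers do not keep using an outdated copy.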

Caching API calls is an interesting development of the past few years, but it is still tricky to get right. Using a mature platform to cache such API calls is usually the better choice.

An API-first CMS like ButterCMS allows you to cache your API calls for various entities such as posts, testimonials, and lists of services. Caching API calls gives faster response times for APIs that might otherwise take 1-2 seconds when the data is large.

With global caching, ButterCMS can deliver data for API calls quickly and with less load on the server. This results in better performance and increased satisfaction for you as a client. With CMS platforms, you can leave the complete caching responsibility to the API provider, which reduces your development time and gives better-quality output.

Dynamic data is the final set of resources: it changes often and would need frequent cache cleaning to show the right values. Hence, the general advice for such resources is to ensure that they are not cached at the user or server level. Any caching of dynamic data can compromise the correctness of the data shown on the screen or consumed by a service.
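One common way to keep dynamic responses out of caches is to send `Cache-Control: no-store`, which instructs browsers and intermediate caches not to store the response. The sketch below shows the idea; `with_no_store` is a hypothetical helper, and the extra `Pragma: no-cache` header is included only as a fallback for old HTTP/1.0 caches.

```python
from typing import Dict


def with_no_store(headers: Dict[str, str]) -> Dict[str, str]:
    """Return a copy of `headers` that forbids caching of the response."""
    result = dict(headers)  # do not mutate the caller's dictionary
    result["Cache-Control"] = "no-store"
    result["Pragma"] = "no-cache"  # legacy fallback for HTTP/1.0 caches
    # An explicit expiry would contradict no-store, so remove it if present.
    result.pop("Expires", None)
    return result
```

Applying this to the response for, say, a live dashboard endpoint means every request goes back to the server and always sees current data.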

Based on the discussion above, we can conclude that caching is a must-do activity for every web application owner. Caching brings numerous benefits, which in turn lead to a satisfying visitor experience. However, choosing the right strategy, or the right mix of strategies, is crucial and must be done with proper knowledge.

With the right strategy and resources, you can make your web application faster and better optimized. For more information about optimizing the performance of your web application, check out our Beginner's Guide to Front-End Performance.